Introduction

In this article I will show you how to solve some common problems that you might encounter when you use stored procedures as the source in a Data Flow Task. These particular problems arise from the fact that stored procedures do not expose metadata. Problems also result from the common practice of letting the NOCOUNT setting in its default value (OFF). The root causes have been explained previously here and here. Since I have nothing to add to the matter, I recommend you to read the references at the end of this article.

Requirements

This article requires the following items:

- SQL Server 2008 R2

- BIDS for SSIS development

- SQL Server 2012 or 2014 and SSDT to simulate a migration scenario.

- PowerShell to generate metadata statements from the stored procedure's returned resultset.

Getting Started

We are going to create four Stored Procedures for testing purposes. Three of them have the same signature and return the same resultset. The fourth one is slightly different since it returns a different resultset depending on an input parameter. You'll notice that I haven't set the clause NOCOUNT to ON. This is to emulate the behavior of "problematic" procedures.

All procedures will be created on tempdb to avoid disruptions in your system.

USE tempdb;

GO

IF OBJECT_ID(N'dbo.SomeDummyTable', N'U') IS NOT NULL

DROP TABLE dbo.SomeDummyTable;

GO

IF OBJECT_ID(N'dbo.ProcedureWithDML', N'P') IS NOT NULL

DROP PROCEDURE dbo.ProcedureWithDML;

GO

IF OBJECT_ID(N'dbo.ProcedureDynamicSql', N'P') IS NOT NULL

DROP PROCEDURE dbo.ProcedureDynamicSql;

GO

IF OBJECT_ID(N'dbo.ProcedureTempTable', N'P') IS NOT NULL

DROP PROCEDURE dbo.ProcedureTempTable;

GO

IF OBJECT_ID(N'dbo.ProcedureMultipleResultSets', N'P') IS NOT NULL

DROP PROCEDURE dbo.ProcedureMultipleResultSets;

GO

CREATE TABLE dbo.SomeDummyTable( dummy INTEGER );

GO

CREATE PROCEDURE dbo.ProcedureWithDML

@StartIndex AS INTEGER,

@BatchSize AS SMALLINT

AS

BEGIN

--SET NOCOUNT ON; Commented deliberately to produce errors

DELETE dbo.SomeDummyTable

WHERE 1 = 0;

SELECT CAST(number AS SMALLINT) AS number,

CAST(high - 1 AS TINYINT) AS high,

CAST(low - 1 AS TINYINT) AS low

FROM master.dbo.spt_values

WHERE [type] = 'P'

AND number >= @StartIndex

AND number < @StartIndex + @BatchSize;

END;

GO

CREATE PROCEDURE dbo.ProcedureDynamicSql

@StartIndex AS INTEGER,

@BatchSize AS SMALLINT

AS

BEGIN

--SET NOCOUNT ON; Commented deliberately to produce errors

DECLARE @SqlCmmd AS NVARCHAR(MAX) = N'

SELECT CAST(number AS SMALLINT) AS number,

CAST(high - 1 AS TINYINT) AS high,

CAST(low - 1 AS TINYINT) AS low

FROM master.dbo.spt_values

WHERE [type] = ''P''

AND number >= @StartIndex

AND number < @StartIndex + @BatchSize;';

DECLARE @Params AS NVARCHAR(500) = N'@StartIndex AS INTEGER, @BatchSize AS SMALLINT';

EXECUTE sp_executesql

@statement = @SqlCmmd,

@params = @Params,

@StartIndex = @StartIndex,

@BatchSize = @BatchSize;

END;

GO

CREATE PROCEDURE dbo.ProcedureTempTable

@StartIndex AS INTEGER,

@BatchSize AS SMALLINT

AS

BEGIN

SELECT CAST(number AS SMALLINT) AS number,

CAST(high - 1 AS TINYINT) AS high,

CAST(low - 1 AS TINYINT) AS low

INTO #Result

FROM master.dbo.spt_values

WHERE [type] = 'P'

AND number >= @StartIndex

AND number < @StartIndex + @BatchSize;

SELECT *

FROM #Result;

END;

GO

CREATE PROCEDURE dbo.ProcedureMultipleResultSets

@StartIndex AS INTEGER,

@BatchSize AS SMALLINT,

@Type AS TINYINT = 0

AS

BEGIN

IF @Type = 0

BEGIN

SELECT CAST(number AS SMALLINT) AS number,

CAST(high - 1 AS TINYINT) AS high,

CAST(low - 1 AS TINYINT) AS low

FROM master.dbo.spt_values

WHERE [type] = 'P'

AND number >= @StartIndex

AND number < @StartIndex + @BatchSize;

END

ELSE

BEGIN

--New columns and different order.

SELECT CAST(high - 1 AS TINYINT) AS high,

CAST(low - 1 AS TINYINT) AS low, --> Just for fun change to SMALLINT and see what happens

CAST(number AS SMALLINT) AS number,

CAST(SYSUTCDATETIME() AS DATETIME2(0)) AS [timestamp],

NEWID() AS [newid]

FROM master.dbo.spt_values

WHERE [type] = 'P'

AND number >= @StartIndex

AND number < @StartIndex + @BatchSize;

END;

END;

GO

Now create a new SSIS project in BIDS. Add two connections, one of type OLEDB and one of type ADO.NET and configure them for your test environment.

Add a Sequence Container and inside it put two Data Flow Tasks.

Configure the first Data Flow Task to execute the procedure "ProcedureWithDML" using the OLEDB connection. We will use a Row Count transformation as a dummy destination, so we need to add an integer variable, too.

Repeat the process for the second Data Flow using now the ADO.NET connection.

Copy and paste the entire Sequence Container, and configure the Data Flows to execute now the procedure "ProcedureDynamicSql". Up to this point, you should have found no issues.

Execute the package now. You'll see something similar to the following image:

The OLE BD connection retrieves the metadata differently than ADO.NET (you can verify this with a SQL Server Profiler session). But the real problem is related to the delete statement at the beginning of the procedure. It returns a DONE_IN_PROC message to the OLEDB connection, and the connection interprets the message as an empty resultset, hence the error.

So, how do we solve this problem? Just write "SET NOCOUNT ON;" before the EXECUTE line.

Execute the package again. No errors should occur

More complications

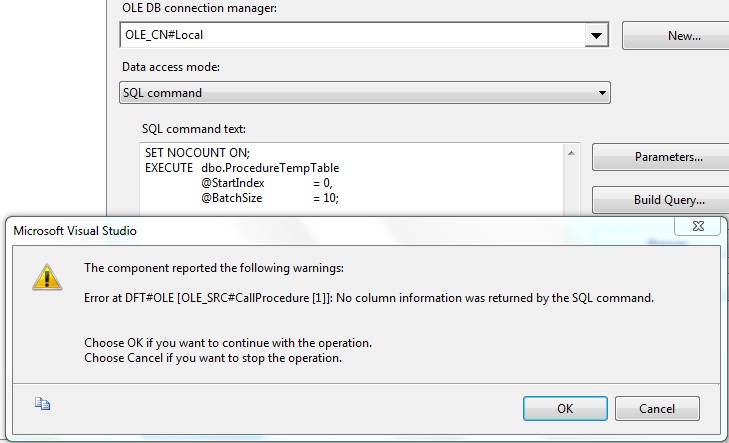

Configure now the procedure "ProcedureTempTable". To save time, just copy one of the existing Sequence Containers, then edit the “SQL command text”. You should see the following warning:

If you hit the Preview button, the procedure works as expected, yet you have no usable metadata to configure the source.

Maybe the ADO.NET source would do the trick:

What now? We have to provide usable metadata to the source components. How can we obtain it? For this trivial procedures shouldn’t be a complicated task to track down every column in the resultset with their respective data types.

But since I have to deal with fairly complex procedures frequently, I build a PowerShell script to automate this process.

# Author: Adán Bucio

# Date: 2014-10-10

# Purpose: Generate usable metadata for SSIS data flow sources from a Sql Server Script or procedure.

# Disclaimer: This script is provided as is without any support.

# Instructions:

# 1. Replace $QueryOrProc variable with your script or procedure

# 2. Replace $MyEnv keys with your own SQL Server host and database

$ErrorActionPreference = 'Stop'

$QueryOrProc = @"

--Put your code here. If you are passing a procedure

--set param values to retrieve just a small set.

EXECUTE dbo.ProcedureMultipleResultSets

@StartIndex = 0,

@BatchSize = 10,

@Type = 1;

"@

$MyEnv = @{

host = '(local)';

database = 'tempdb';

version = 2008; # Valid values: 2008, 2012, 2014

appname = 'PSMetadataResolver';

}

$cnnstr = New-Object -TypeName System.Data.SqlClient.SqlConnectionStringBuilder

$cnnstr['Data Source'] = $myenv.host

$cnnstr['Initial Catalog'] = $myenv.database

$cnnstr['Integrated Security'] = 'SSPI'

$cnnstr['Application Name'] = $myenv.appname

[System.Data.DataTable] $metadata = $null

[Data.SqlClient.SqlConnection] $conn = $null

Try {

$conn = New-Object System.Data.SqlClient.SqlConnection($cnnstr.ToString())

$cmd = New-Object System.Data.SqlClient.SqlCommand($QueryOrProc, $conn)

$cmd.CommandType = [System.Data.CommandType]::Text

$conn.Open()

Try {

#$reader = $cmd.ExecuteReader([System.Data.CommandBehavior]::SchemaOnly)

#I would usually invoke ExecuteReader with [System.Data.CommandBehavior]::SchemaOnly

#to only get the metadata (for queries and well formed procedures)

#but since we know this procedures have issues, we do a real execution

$reader = $cmd.ExecuteReader()

$metadata = $reader.GetSchemaTable();

}

Catch [System.Data.SqlClient.SqlException] {

<##No metadata --> Execute procedure

If($_.Exception.Number -eq 208) { # Invalid object name 'xxxxxx'.

$reader = $cmd.ExecuteReader()

$metadata = $reader.GetSchemaTable()

}

Else { Throw $_.Exception }#>

}

}

Finally {

If($conn -ne $null) { $conn.Dispose() }

}

$strbuilder = New-Object System.Text.StringBuilder

If($MyEnv.version -le 2008) {

[void]$strbuilder.AppendLine("IF 1 = 0");

[void]$strbuilder.AppendLine(" SELECT");

}

Else{

[void]$strbuilder.AppendLine("WITH RESULT SETS ((");

}

$columnTemplate = If($MyEnv.version -le 2008) {

" CAST(NULL AS {0}) AS [{1}]{2}"

}

Else {

" [{1}] {0} NULL{2}"

}

$metadata |% {

$lineterminator = If($_.ColumnOrdinal -ne $metadata.Rows.Count - 1) { "," }

Else { If($MyEnv.version -le 2008) { ";" } Else { "));" } }

If( "char", "varchar", "nchar", "nvarchar", "binary", "varbinary" -contains $_.DataTypeName ) {

$length = If( $_.ColumnSize -eq 2147483647) { 'max' } else { "$($_.ColumnSize)" }

[void]$strbuilder.AppendFormat($columnTemplate, `

"$($_.DataTypeName)($($length)))", $_.ColumnName, $lineterminator)

}

ElseIf( "time", "datetime2", "datetimeoffset" -contains $_.DataTypeName ) {

[void]$strbuilder.AppendFormat($columnTemplate, `

"$($_.DataTypeName)($($_.NumericScale))", $_.ColumnName, $lineterminator)

}

ElseIf( $_.DataTypeName -eq "decimal" ) {

[void]$strbuilder.AppendFormat($columnTemplate, `

"$($_.DataTypeName)($($_.NumericPrecision), $($_.NumericScale))", $_.ColumnName, $lineterminator)

}

Else {

[void]$strbuilder.AppendFormat($columnTemplate, $_.DataTypeName, $_.ColumnName, $lineterminator)

}

[void]$strbuilder.AppendLine()

}

$strbuilder.ToString()

Copy the generated snippet and paste it in the “SQL command text” of your OLE DB Source

Before executing the package let's configure the last procedure. For this case we are interested in retrieving the second resultset (@Type = 1). If we hit the Preview button we see the expected resultset:

However, the OLE DB Source takes the first resultset defined in the procedure body, thus using the “wrong” metadata:

Imagine for a moment that some careless developer hit the “OK” button. What would have happened? Well, it depends on whether you are using OLE DB or ADO.NET and how much the resultsets differ from each other.

When OLE DB is used, the columns in the resultset are matched with the metadata columns by position, thus if a value could be converted to the type on that position, no error would occur.

On the other hand, when ADO.NET is used, the columns in the resultset are matched with the metadata by name without conversion. Because in this example the second resultset contains all the columns of the first one with the same data types, no error would occur either.

If we proceed with this configuration and execute the package we would see something like this (with the help of a pair of Data Viewers):

So, be extra careful when you have to map this type of procedures. In the best case scenario an exception will be thrown. In the worst case you’ll end up with incorrect data in your columns.

Let's use again the PowerShell Script to get the correct metadata.

Configure the remaining OLEDB and ADO.NET sources.

Execute the package now.

Upgrade the solution

Now that everything works smoothly, let’s upgrade our package to SQL Server 2014. Create a new project on SSDT and Import the package.

Execute the package

Perfect! Everything is working just fine. But wait! Our connections still reference the SQL Server 2008 instance. So let's upgrade the database objects to SQL Server 2014 too. Go on and execute the setup script on your SQL Server 2014 instance.

Now we'll update our connection managers.

As soon as we change the OLE DB Connection, we see that three of our OLE DB dependent Data Flows aren't doing well. If we try to refresh the metadata we'll see different errors but the one that leads to a solution is this one:

It seems that starting with SQL Server 2012, the OLE DB Provider changed the way it retrieves metadata. Let's follow the error message suggestion to use the clause “WITH RESULT SETS” . You can generate the appropriate clause using the PowerShell script provided with this article.

Just paste your stored procedure invocation, change the version key to 2012 or 2014 and execute.

Copy the output to the OLE DB Source and refresh the metadata. Repeat for the other OLE DB Sources

Execute the package, everything should work well now.

Conclusion

If you have this kind of problems you should refactor your procedures or even better, expose your data via table valued functions. But for the countless times I have heard that changing the code is not feasible for whatever reasons, I decided to automate the metadata generation and share with you these techniques.

It appears that ADO.NET is a better choice when dealing with this type of procedures, but I wouldn’t recommend you to choose this type of connection by default. It masks some bad programming practices that sooner or later you’ll have to deal with.

References

- http://sqlblog.com/blogs/adam_machanic/archive/2006/07/12/stored-procedures-are-not-parameterized-views.aspx

- http://consultingblogs.emc.com/jamiethomson/archive/2006/12/20/SSIS_3A00_-Using-stored-procedures-inside-an-OLE-DB-Source-component.aspx

- http://blogs.msdn.com/b/psssql/archive/2013/07/23/when-does-sp-prepare-return-metadata.aspx

- http://msdn.microsoft.com/en-us/library/ms189837.aspx

- http://technet.microsoft.com/en-us/library/ee176961.aspx

- http://msdn.microsoft.com/en-us/library/ms188332.aspx